Issue #16: OpenRouter vs Ollama vs Hugging Face. Here’s What To Use.

TL;DR Most teams are overwhelmed by AI tooling. OpenRouter is your multi-model API gateway. Hugging Face is the model universe. Ollama runs models locally. Ollama

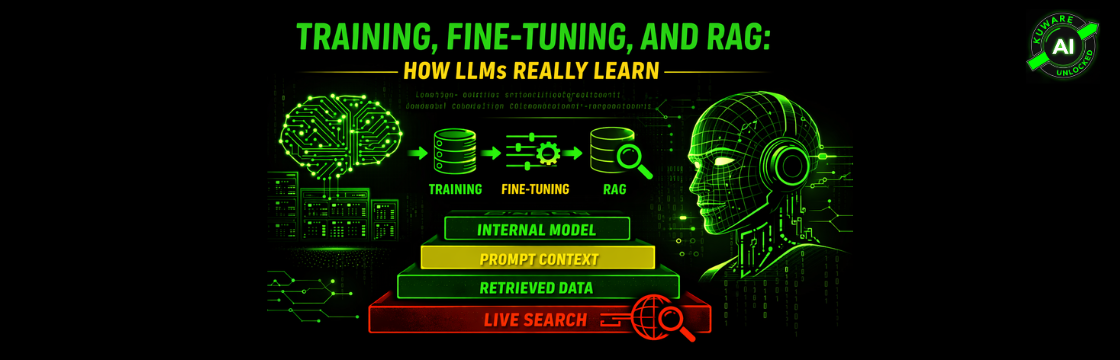

For businesses seeking AI leverage, it is crucial to understand the difference between Training, Fine-Tuning, and RAG. Training builds a model’s brain from zero, which is costly. Fine-tuning adjusts a pre-trained model with proprietary data. Most businesses should start with RAG (Retrieval-Augmented Generation), which injects fresh, company-specific knowledge at runtime without changing the model’s core weights, offering faster iteration and higher ROI.

The 2017 Transformer architecture, built on the ‘Attention’ mechanism (Q, K, V), revolutionized AI by enabling parallel processing and replacing slow, sequential RNNs. It powers nearly all modern models, but the cost of attention scales quadratically with sequence length (O(n²)), a bottleneck the article calls the “Quadratic Crisis.” The next AI pivot is toward ‘Selection,’ driven by linear-scaling models like Mamba, which emphasize intelligent forgetting to overcome memory and data bottlenecks.
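To make the Q, K, V idea and the O(n²) cost concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It assumes a single head with no masking or batching, and the helper names (`softmax`, `attention`, `score_count`) are illustrative rather than taken from the article.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Single-head scaled dot-product attention over lists of row vectors."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # One score per (query, key) pair: this inner loop is the n x n cost.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Each output row is a weighted average of the value rows.
        out.append([sum(w * v[j] for w, v in zip(weights, V))
                    for j in range(len(V[0]))])
    return out

def score_count(n):
    # Number of query-key scores computed for a sequence of n tokens.
    return n * n

if __name__ == "__main__":
    tokens = [[1.0, 0.0], [0.0, 1.0]]
    print(attention(tokens, tokens, tokens))
    # Doubling the sequence length quadruples the number of scores.
    print(score_count(2048) // score_count(1024))
```

Every query is compared against every key, which is why doubling the context length roughly quadruples the attention work.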

Large Language Models suffer from “catastrophic forgetting” when fine-tuned, a phenomenon the author calls digital amnesia. The article explains the underlying mechanics (gradient conflict, representational drift) and the danger of loss landscape flattening. It advocates for Parameter-Efficient Fine-Tuning (PEFT) techniques like LoRA and QLoRA to specialize LLMs efficiently while preserving their core knowledge and preventing data loss.
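A rough sketch of why LoRA is parameter-efficient: the pre-trained weight matrix W stays frozen and only a low-rank update B·A is trained. The dimensions and rank below are hypothetical, and the helper names are illustrative, not from any particular library.

```python
def lora_param_counts(d_out, d_in, rank):
    """Return (full fine-tune params, LoRA trainable params) for one weight matrix."""
    full = d_out * d_in              # every entry of W is trainable
    lora = rank * (d_in + d_out)     # A is rank x d_in, B is d_out x rank
    return full, lora

def matvec(M, x):
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]

def lora_forward(W, A, B, x, scale=1.0):
    """Compute y = W x + scale * B (A x); W is frozen, only A and B would be trained."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + scale * d for b, d in zip(base, delta)]

if __name__ == "__main__":
    full, lora = lora_param_counts(4096, 4096, 8)   # hypothetical layer size and rank
    print(full, lora, f"{100 * lora / full:.2f}% of the full matrix")

    W = [[1.0, 2.0], [3.0, 4.0]]
    A = [[1.0, 1.0]]                 # rank 1
    B_zero = [[0.0], [0.0]]          # with B at zero, the adapter changes nothing
    print(lora_forward(W, A, B_zero, [1.0, 1.0]))
```

Because the original weights are never overwritten, removing the adapter restores the base model exactly, which is the property that helps limit forgetting.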

RAG (Retrieval-Augmented Generation) is now the mandatory architecture for trustworthy enterprise AI. It addresses the fundamental weaknesses of LLMs (hallucinations, frozen knowledge, and opacity) by separating knowledge from reasoning. RAG systems ensure traceable, auditable, and grounded intelligence, becoming the new standard for mission-critical production environments in fields like healthcare and legal research.
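The separation of knowledge from reasoning can be sketched in a few lines. This toy example assumes keyword-overlap retrieval in place of a real vector search, and the documents, IDs, and function names are all hypothetical. The point is only that retrieved passages carry source IDs into the prompt, which is what makes answers traceable.

```python
import re

# Hypothetical knowledge base; in practice this would live in a document store.
DOCS = [
    {"id": "policy-7", "text": "Refunds are accepted within 30 days of purchase."},
    {"id": "hr-2", "text": "Employees accrue vacation monthly."},
]

def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, k=2):
    """Rank documents by shared words with the query (a stand-in for embedding search)."""
    q = tokenize(query)
    scored = [(len(q & tokenize(doc["text"])), doc) for doc in documents]
    scored = [s for s in scored if s[0] > 0]
    scored.sort(key=lambda s: s[0], reverse=True)
    return [doc for _, doc in scored[:k]]

def build_prompt(query, passages):
    """Inject retrieved passages, tagged with their IDs, ahead of the question."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"Sources:\n{context}\n"
        f"Question: {query}"
    )

if __name__ == "__main__":
    question = "Refunds within how many days?"
    print(build_prompt(question, retrieve(question, DOCS)))
```

The model's weights are never touched here: updating the knowledge means editing `DOCS`, not retraining.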

TL;DR Most companies misunderstand how LLMs learn. Training, fine-tuning, and RAG are not interchangeable. Fine-tuning can cause catastrophic forgetting. RAG is now the default enterprise architecture.

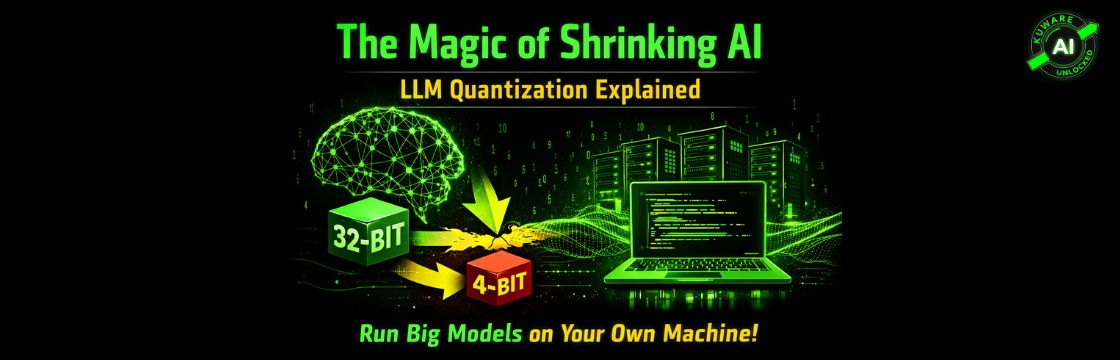

Quantization is the key to running huge Large Language Models (LLMs) on personal devices. It works by reducing the precision of model weights, dramatically shrinking file size (e.g., a 70B model from 280GB at 32-bit precision to ~40GB with Q4_K_M) while preserving utility. This practical guide explains the process, formats like GGUF, and the balance between fidelity and size, making local, private AI accessible to all.
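The size figures follow from simple arithmetic: parameters × bits per weight ÷ 8. The sketch below reproduces them, with two assumptions. The 280GB figure corresponds to 32-bit weights, and Q4_K_M is taken to average roughly 4.85 bits per weight (an approximate value that varies by model). The `quantize_symmetric` helper is a plain round-to-nearest illustration, not the block-wise scheme that Q4_K_M actually uses.

```python
def model_size_gb(params, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), ignoring metadata."""
    return params * bits_per_weight / 8 / 1e9

def quantize_symmetric(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for 4-bit
    scale = (max(abs(w) for w in weights) / qmax) or 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(q, scale):
    return [v * scale for v in q]

if __name__ == "__main__":
    print(model_size_gb(70e9, 32))               # 280.0 (32-bit floats)
    print(model_size_gb(70e9, 16))               # 140.0 (16-bit floats)
    print(round(model_size_gb(70e9, 4.85), 1))   # about 42.4, assuming ~4.85 bits/weight

    q, scale = quantize_symmetric([0.7, -0.2, 0.05])
    print(q, dequantize(q, scale))               # small integers, then the lossy reconstruction
```

The reconstruction error per weight is at most half the scale, which is the fidelity-versus-size trade-off in miniature.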

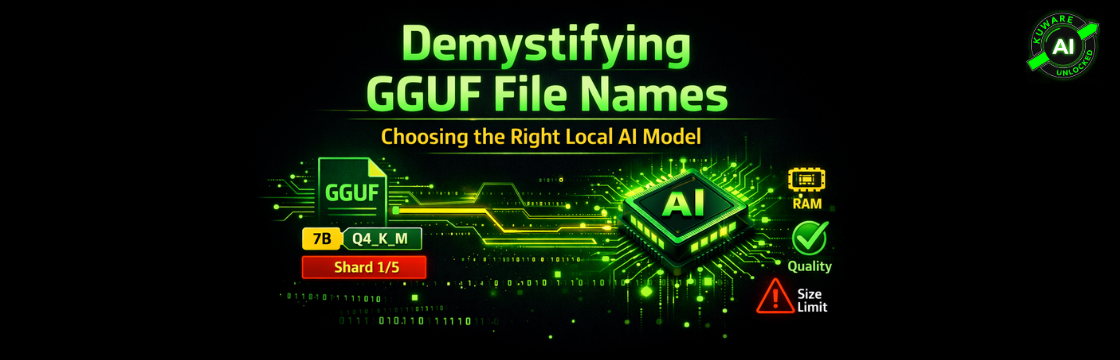

This guide demystifies GGUF filenames for local AI users. It explains how components like model name, parameter count, and quantization (e.g., Q4_K_M) reveal a model’s size, quality, and hardware demands. Understanding this standardized naming convention, created by the llama.cpp project, is essential for choosing an efficient model without guesswork, ensuring a smooth local AI experience.
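As a rough illustration of reading these components programmatically, the sketch below pulls the parameter count and quantization tag out of a filename with regular expressions. The filename is made up, and the patterns are a simplifying assumption that covers only common `Q…`/`IQ…` tags and sizes like `7B`, so real-world names may need extra cases.

```python
import re

# Quantization tag such as Q4_K_M, Q8_0, or IQ4_XS; group 2 is the nominal bit width.
QUANT_RE = re.compile(r"(?<![A-Za-z0-9])(I?Q(\d+)(?:_[A-Z0-9]+)*)(?![A-Za-z0-9])", re.I)
# Parameter count such as 7B, 70B, or 1.5B.
SIZE_RE = re.compile(r"(?<![A-Za-z0-9.])(\d+(?:\.\d+)?)([BM])(?![A-Za-z0-9])", re.I)

def parse_gguf_name(filename):
    """Extract parameter count, quantization tag, and nominal bits from a GGUF filename."""
    stem = filename[:-5] if filename.lower().endswith(".gguf") else filename
    size = SIZE_RE.search(stem)
    quant = QUANT_RE.search(stem)
    return {
        "params": size.group(0).upper() if size else None,
        "quant": quant.group(1).upper() if quant else None,
        "bits": int(quant.group(2)) if quant else None,
    }

if __name__ == "__main__":
    # Hypothetical filename, used only to exercise the parser.
    print(parse_gguf_name("example-model-7B-instruct.Q4_K_M.gguf"))
```

Lower nominal bits mean a smaller file and lower memory demand, at some cost in output quality.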

The 2026 Architect’s Guide details the shift to local AI, emphasizing that VRAM capacity is critical for running models, while compute speed determines response time. It contrasts the Mac’s unified memory for large model capacity, simplicity, and silence, with the PC’s discrete VRAM and NVIDIA Blackwell’s raw throughput advantage, especially with native FP4. The choice, Mac or PC, is an architectural decision based on your model’s specific needs.

Choosing the right computer for local AI and LLMs is primarily about memory, not raw CPU speed. LLMs are memory-bandwidth bound. The guide recommends a MacBook Pro (64 GB unified memory minimum) for portability or a Mac Studio (64 GB unified memory) as a dedicated, desk-bound AI lab. Quantization (Q4_K_M) makes local LLM work possible, and prioritizing memory over the newest chip is key to avoiding slow, unpredictable performance.
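A back-of-the-envelope sketch of what "memory-bandwidth bound" means in practice: generating each token requires reading roughly all of a dense model's weights once, so memory bandwidth divided by model size gives an upper bound on tokens per second. The bandwidth, model size, and headroom values below are hypothetical placeholders, not measurements of any specific machine.

```python
def max_tokens_per_second(bandwidth_gb_s, model_size_gb):
    """Upper bound on decode speed if every token reads all weights once."""
    return bandwidth_gb_s / model_size_gb

def fits_in_memory(model_size_gb, memory_gb, headroom_gb=8.0):
    """Check capacity, leaving assumed headroom for the OS, KV cache, and other apps."""
    return model_size_gb + headroom_gb <= memory_gb

if __name__ == "__main__":
    model_gb = 40.0            # e.g. a 70B model at roughly 4-bit quantization
    print(fits_in_memory(model_gb, memory_gb=64))    # True
    print(fits_in_memory(model_gb, memory_gb=32))    # False
    # Hypothetical 400 GB/s memory bandwidth.
    print(max_tokens_per_second(400.0, model_gb))    # 10.0
```

Real throughput lands below this bound, but the estimate shows why memory capacity and bandwidth matter more than the newest CPU.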