Everyone’s building agents right now.

And honestly… most of them are just fancy prompts.

They look smart in demos. They fall apart in real use.

Why?

Because they forget everything.

The Problem Nobody Talks About

Let’s be blunt.

Most AI systems today are stateless by default.

You ask something.

It answers.

Next question? Clean slate.

No memory. No learning. No continuity.

That’s fine for one-off tasks.

But the moment you try to use AI for real work (research, coding, marketing, customer support), it breaks.

It keeps repeating the same thinking.

Re-solving the same problems.

Re-learning what it already “knew” yesterday.

That’s not intelligence.

That’s expensive autocomplete.

What Andrew Ng’s Course Gets Right

The course Agent Memory: Building Memory-Aware Agents (from DeepLearning.AI, introduced by Andrew Ng, taught by Richmond Alake and Nacho Martínez) frames this shift very clearly:

Memory is what turns an LLM into an agent that improves over time.

And that sounds simple. But it’s actually a massive architectural shift.

The Core Idea: The Brain Outside the Skull

LLMs have a hard limit. Context window.

You can’t just keep stuffing everything into prompts.

So what do you do?

You move memory outside the model.

Think of it like this:

- LLM = reasoning engine

- Memory system = long-term brain

That external memory could be:

- A database

- A vector store

- A structured knowledge base

But the key is this:

👉 The agent decides what to remember and what to recall

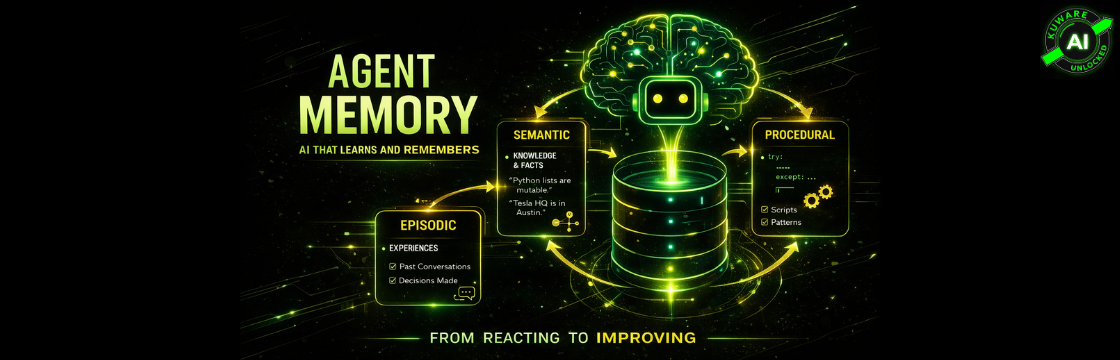

The Three Types of Memory (This Actually Matters)

This is one part of the course that’s more important than it looks at first glance.

1. Semantic Memory

Facts. Pure knowledge.

- Python lists are mutable

- Tesla HQ is in Austin

- Google Ads CPC trends matter

Stored as embeddings. Retrieved via similarity.

This is your knowledge layer.
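To make "stored as embeddings, retrieved via similarity" concrete, here is a minimal sketch. It is not the course's implementation. The `embed` function is a toy stand-in (a bag-of-words count) for a real embedding model, and `SemanticMemory` is a hypothetical name used only for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticMemory:
    """Hypothetical fact store: keeps each fact next to its vector."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, fact: str) -> None:
        self.items.append((fact, embed(fact)))

    def search(self, query: str, k: int = 1) -> list[str]:
        """Return the k facts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [fact for fact, _ in ranked[:k]]


memory = SemanticMemory()
memory.add("Python lists are mutable")
memory.add("Tesla HQ is in Austin")
top = memory.search("are python lists mutable")
```

In a real system you would likely swap `embed` for an embedding model and the list for a vector store. The shape of the idea stays the same: embed on write, rank by similarity on read.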

2. Episodic Memory

What happened.

- Conversations

- Decisions

- Outcomes

Think of it as a diary.

Your agent remembers:

- What it tried

- What worked

- What failed

This is where continuity comes from.
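The diary idea can be sketched as an append-only log of what was tried and how it went. This is an assumption about one reasonable shape, not the course's code. `Episode`, `EpisodicMemory`, and the example tasks are all made up for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Episode:
    task: str
    action: str
    outcome: str  # "worked" or "failed" (illustrative labels)
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class EpisodicMemory:
    """Hypothetical append-only diary of what the agent tried."""

    def __init__(self) -> None:
        self.episodes: list[Episode] = []

    def record(self, task: str, action: str, outcome: str) -> None:
        self.episodes.append(Episode(task, action, outcome))

    def history(self, task: str) -> list[Episode]:
        """Everything that happened for one task, oldest first."""
        return [e for e in self.episodes if e.task == task]

    def failed_actions(self, task: str) -> set[str]:
        """Actions already known to fail, so the agent can skip them."""
        return {e.action for e in self.history(task) if e.outcome == "failed"}


diary = EpisodicMemory()
diary.record("fix login bug", "restart server", "failed")
diary.record("fix login bug", "clear session cache", "worked")
diary.record("write ad copy", "short headline", "worked")
```

Asking `diary.failed_actions("fix login bug")` before acting is what keeps the agent from retrying the thing that already failed.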

3. Procedural Memory

How to do things.

- Workflows

- Scripts

- Patterns

This is the most underrated one.

Because this is where the agent stops being reactive and starts being efficient.

It doesn’t just answer.

It executes.
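One way to picture procedural memory is a registry of named workflows the agent can replay instead of re-deriving. A rough sketch follows, with `ProceduralMemory` and the `clean_title` workflow invented purely for illustration:

```python
from typing import Callable

Step = Callable[[str], str]


class ProceduralMemory:
    """Hypothetical registry mapping a workflow name to its ordered steps."""

    def __init__(self) -> None:
        self.procedures: dict[str, list[Step]] = {}

    def learn(self, name: str, steps: list[Step]) -> None:
        self.procedures[name] = list(steps)

    def execute(self, name: str, data: str) -> str:
        """Run each stored step in order, feeding the output forward."""
        for step in self.procedures[name]:
            data = step(data)
        return data


skills = ProceduralMemory()
skills.learn("clean_title", [str.strip, str.lower, lambda s: s.replace(" ", "-")])
slug = skills.execute("clean_title", "  Agent Memory Basics ")
```

The steps here are plain string functions to keep the sketch runnable. In practice a step could be a tool call or a prompt template.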

The Missing Piece: The Memory Manager

This is the real system. Not the LLM.

The Memory Manager is doing three things constantly:

- Read: pull only what’s relevant, not everything

- Write: save useful outcomes, not noise

- Store: keep it structured and retrievable

If you don’t have this layer, you don’t have memory.

You just have storage.
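Here is a minimal sketch of that layer, under some loud assumptions: the importance score is supplied by the caller, the "structure" is just a topic-keyed dictionary, and `MemoryManager` and its parameters are hypothetical names. The example notes are made-up strings, not real data.

```python
class MemoryManager:
    """Hypothetical gatekeeper between the agent and its storage."""

    def __init__(self, min_importance: float = 0.5) -> None:
        self.min_importance = min_importance
        # Store: notes are grouped by topic so they stay retrievable.
        self.store: dict[str, list[str]] = {}

    def write(self, topic: str, note: str, importance: float) -> bool:
        """Write: save only notes that clear the importance bar."""
        if importance < self.min_importance:
            return False  # treated as noise, never stored
        self.store.setdefault(topic, []).append(note)
        return True

    def read(self, topic: str, limit: int = 3) -> list[str]:
        """Read: return only the latest few notes for one topic."""
        return self.store.get(topic, [])[-limit:]


manager = MemoryManager()
saved = manager.write("ads", "Headline A beat headline B", importance=0.9)
skipped = manager.write("ads", "User said hello", importance=0.1)
relevant = manager.read("ads")
```

The point of the sketch is the asymmetry: `write` can refuse, and `read` never returns the whole store.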

The Loop That Changes Everything

Once memory is in place, your agent stops being a one-shot responder.

It becomes a loop:

User Input

→ Retrieve Memory

→ Reason

→ Act

→ Write Back

That last step is the game changer.

Because now the system learns.

Not theoretically. Practically.
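The loop above can be sketched in a few lines. Everything here is a placeholder: `reason` stands in for an LLM call, `act` for a tool or action, and retrieval is naive word overlap rather than anything the course prescribes.

```python
def retrieve(memory: list[str], user_input: str) -> list[str]:
    """Retrieve Memory: keep entries sharing at least one word with the input."""
    words = set(user_input.lower().split())
    return [m for m in memory if words & set(m.lower().split())]


def reason(user_input: str, recalled: list[str]) -> str:
    """Reason: placeholder for an LLM call that would use the recalled context."""
    return f"answer '{user_input}' using {len(recalled)} memories"


def act(plan: str) -> str:
    """Act: placeholder for executing the plan (tool call, reply, etc.)."""
    return f"done: {plan}"


def run_turn(memory: list[str], user_input: str) -> str:
    recalled = retrieve(memory, user_input)
    plan = reason(user_input, recalled)
    result = act(plan)
    memory.append(f"{user_input} => {result}")  # Write Back
    return result


memory: list[str] = []
first = run_turn(memory, "summarize pricing feedback")
second = run_turn(memory, "draft pricing email")
```

The first turn runs with nothing to recall. The second turn finds the first turn's entry because both mention "pricing", which is the write-back step paying off.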

A Simple Example (But This Is Where It Clicks)

Let’s say you have a research agent.

Day 1:

- Scrapes papers

- Summarizes findings

- Stores insights

Day 2:

- Loads previous knowledge

- Skips duplicates

- Builds on prior work

That’s not just automation anymore.

That’s compounding intelligence.
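The "skips duplicates" step is the easiest part to sketch. This version assumes papers can be identified by a normalized title, which is a simplification, and it fakes the summary by truncating the text. `ResearchMemory` and the paper titles are hypothetical, and both "days" run in one process here, whereas a real agent would persist the store between runs.

```python
import hashlib


class ResearchMemory:
    """Hypothetical store of insights keyed by a paper fingerprint."""

    def __init__(self) -> None:
        self.insights: dict[str, str] = {}  # fingerprint -> summary

    @staticmethod
    def fingerprint(title: str) -> str:
        """Hash of the lowercased, whitespace-normalized title."""
        normalized = " ".join(title.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ingest(self, papers: dict[str, str]) -> list[str]:
        """Store unseen papers and return the titles that were new."""
        new_titles = []
        for title, text in papers.items():
            key = self.fingerprint(title)
            if key in self.insights:
                continue  # already processed on an earlier run
            self.insights[key] = text[:80]  # placeholder for a real summary
            new_titles.append(title)
        return new_titles


memory = ResearchMemory()
day1 = memory.ingest({"Paper A": "text of paper A", "Paper B": "text of paper B"})
day2 = memory.ingest({"paper a": "text of paper A", "Paper C": "text of paper C"})
```

On the second call only "Paper C" comes back as new, so the agent spends its effort on what it has not seen yet.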

The Part Most People Get Wrong

Everyone gets excited about storing memory.

Nobody thinks hard enough about what not to store.

And this is where systems break.

Memory Bloat

If you store everything:

- Retrieval slows down

- Prompts get polluted

- Signal gets buried in noise

Memory Drift

If you don’t clean memory:

- Old assumptions stick

- Wrong conclusions persist

- The agent gets worse over time

The Hard Problem: Forgetting

The course touches on this. But in practice, this is the real challenge.

Good agents don’t just remember.

They forget intelligently.

You need:

- Importance scoring

- Summarization pipelines

- Consolidation (episodic → semantic)

- Deletion of low-value data

Otherwise your “smart” system turns into a cluttered mess.
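Importance scoring plus deletion can be sketched like this. The exponential decay with a 30-day half-life and the 0.2 threshold are my assumptions for illustration, not something the course specifies, and the memory items are invented examples.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    importance: float  # 0.0 to 1.0, assigned when the item is written
    age_days: int


def score(item: MemoryItem, half_life_days: float = 30.0) -> float:
    """Importance discounted by age: the score halves every half_life_days."""
    return item.importance * 0.5 ** (item.age_days / half_life_days)


def prune(items: list[MemoryItem], threshold: float = 0.2) -> list[MemoryItem]:
    """Keep only items whose decayed score still clears the threshold."""
    return [item for item in items if score(item) >= threshold]


items = [
    MemoryItem("User prefers weekly reports", importance=0.9, age_days=30),
    MemoryItem("User said thanks", importance=0.1, age_days=1),
    MemoryItem("Old pricing assumption", importance=0.5, age_days=90),
]
kept = prune(items)
```

Only the first item survives: the greeting was never important, and the old assumption has decayed below the bar. Summarization and episodic-to-semantic consolidation would sit on top of a rule like this, and they are left out here to keep the sketch small.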

Why This Matters for Real Businesses

Now let’s bring this into your world.

Because this is where it gets interesting.

Marketing Systems

Imagine an agent that:

- Remembers campaign performance

- Learns which ads convert

- Adjusts recommendations automatically

That’s not a tool.

That’s a system with experience.

Customer Support

Instead of:

- Repeating answers

- Asking the same questions

You get:

- Context-aware responses

- Personalization

- Faster resolution

Internal AI (This is the Big One)

Your internal tools become:

- Smarter every day

- Aligned with your workflows

- Harder to replace

Because the value is not in the model.

It’s in the memory.

The Real Insight (This Is the Shift)

Most people think AI progress is about better models.

It’s not.

It’s about better systems around the model.

And memory is the most important part of that system.

Final Thought

If I had to say it in one line:

Without memory, AI is just reacting.

With memory, AI starts improving.

And once it improves…

You’re no longer building tools.

You’re building assets.