Agentic AI vs Non-Agentic AI: What Business Leaders Need to Understand Before the Next Wave of AI

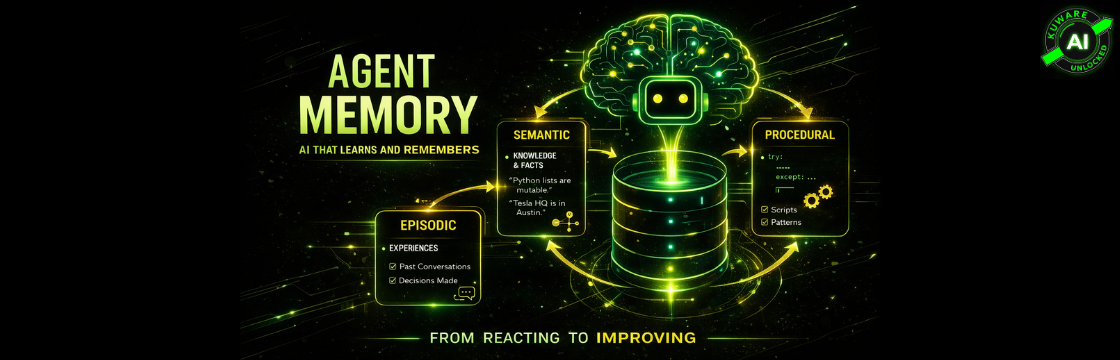

Unlike reactive, non-agentic systems, which only respond to the input they are given, agentic AI pursues goals autonomously: it plans, uses tools, remembers context, and adapts its strategy. This shift from intelligent response to intelligent execution matters for businesses that want to automate complex workflows and gain a competitive advantage in efficiency and scalability.